Made in India language assessment platform

Build interview-ready communication with secure, trust-first testing.

NativeScore is designed for India-focused hiring and academic readiness across BPO, KPO, schools, colleges, and multilingual teams.

Built for India-scale hiring and learning programs

Campaign controls, monitored attempt flow, and certificate trust are connected in one operational design.

Operational surface

- Role-based visibility for admin, supervisor, and HR teams

- Structured screening for schools, colleges, and enterprises

- Evidence-backed outcomes with public verification support

BPO and KPO Communication Screen

Pre-interview communication validation helps service operations improve productivity by hiring role-fit candidates earlier.

Student Interview Readiness

Institutions can run repeated readiness cycles so students are confident in modern online interview assessments.

Verified Certification Lifecycle

Each test outcome can be linked to verifiable certificates for transparent trust among recruiters and institutions.

Visual Highlights

Left-right storytelling aligned with real deployment use cases

Schools and Colleges

Interview readiness starts early for students

Students need practical exposure before placement season. NativeScore helps institutions run guided language assessments so candidates can confidently clear interview filters.

BPO and KPO

Communication quality drives productivity

In BPO, KPO, and service operations, communication skill directly impacts productivity. The platform screens role-fit communication standards before final interview stages.

Make in India Hiring

Regional and global readiness in one workflow

Campaigns can evaluate Hindi, English, and regional language capability under one governance model so teams can hire confidently across multiple markets.

Public Trust

Verification-first outcomes

Each certificate is issued with a unique ID that can be verified publicly. This keeps candidate outcomes transparent for schools, recruiters, and compliance teams.

Training Snapshots

High-definition candidate preparation journeys

Practice Cycle 01

Structured preparation for modern interview filters

Candidates can build confidence with repeat practice cycles that mirror real online assessments, including strict timings and controlled response flow.

Practice Cycle 02

Clear instructions and smoother candidate completion

Guided structure reduces candidate confusion and increases attempt completion quality in high-volume readiness programs.

Practice Cycle 03

Secure flow with real operational controls

Students and job-seekers practice under practical constraints such as navigation discipline and section transitions used in real screening systems.

Practice Cycle 04

Result intelligence that helps mentors guide candidates

Program mentors can identify pattern-level weaknesses early and support candidates with focused coaching before live interview rounds.

Make in India Vision

Purpose-driven assessment design

Vision 01

Communication-first for BPO and KPO

Strong communication standards directly improve productivity in BPO, KPO, and customer-facing service teams.

Vision 02

Student readiness before interview day

Students should be trained in advance so they can crack interviews and boost career outcomes with confidence.

Vision 03

Four core modules with structured question types

Every test includes reading, writing, listening, and speaking. Each module is designed with multiple question formats.

Vision 04

NativeScore level with global CEFR bands

Certificate outcomes combine NativeScore level with global levels such as A1, A2, B1, B2, C1, and C2.

Vision 05

Public certificate verification

Each certificate can be verified publicly from the Verify Certificate page for transparent trust.

Operational Insight

How NativeScore improves real-world assessment reliability

Why organizations switch from fragmented test stacks

Teams often run one tool for question delivery, another for proctoring, and a separate system for results or certificates. NativeScore connects these checkpoints so campaign setup, secure attempts, results, and certificate verification stay aligned.

What helps candidates complete attempts without confusion

Stable instruction flow, predictable timers, and secure-entry checks reduce friction. Candidates can focus on language performance instead of browser uncertainty.

How supervisors and HR teams use attempt intelligence

Post-assessment decisions need context, not just numbers. Attempt records, evidence references, and certificate-linked outcomes improve decision confidence across high-volume hiring.

How institutions build long-term readiness programs

Schools and colleges run repeated benchmark cycles to prepare students for real interview workflows. This creates stronger placement readiness and measurable progression.

FAQ

Frequently asked questions

What does an online job assessment test on NativeScore include?

NativeScore provides online job assessment tests with reading, writing, listening, speaking, and typing sections, all run under secure campaign controls.

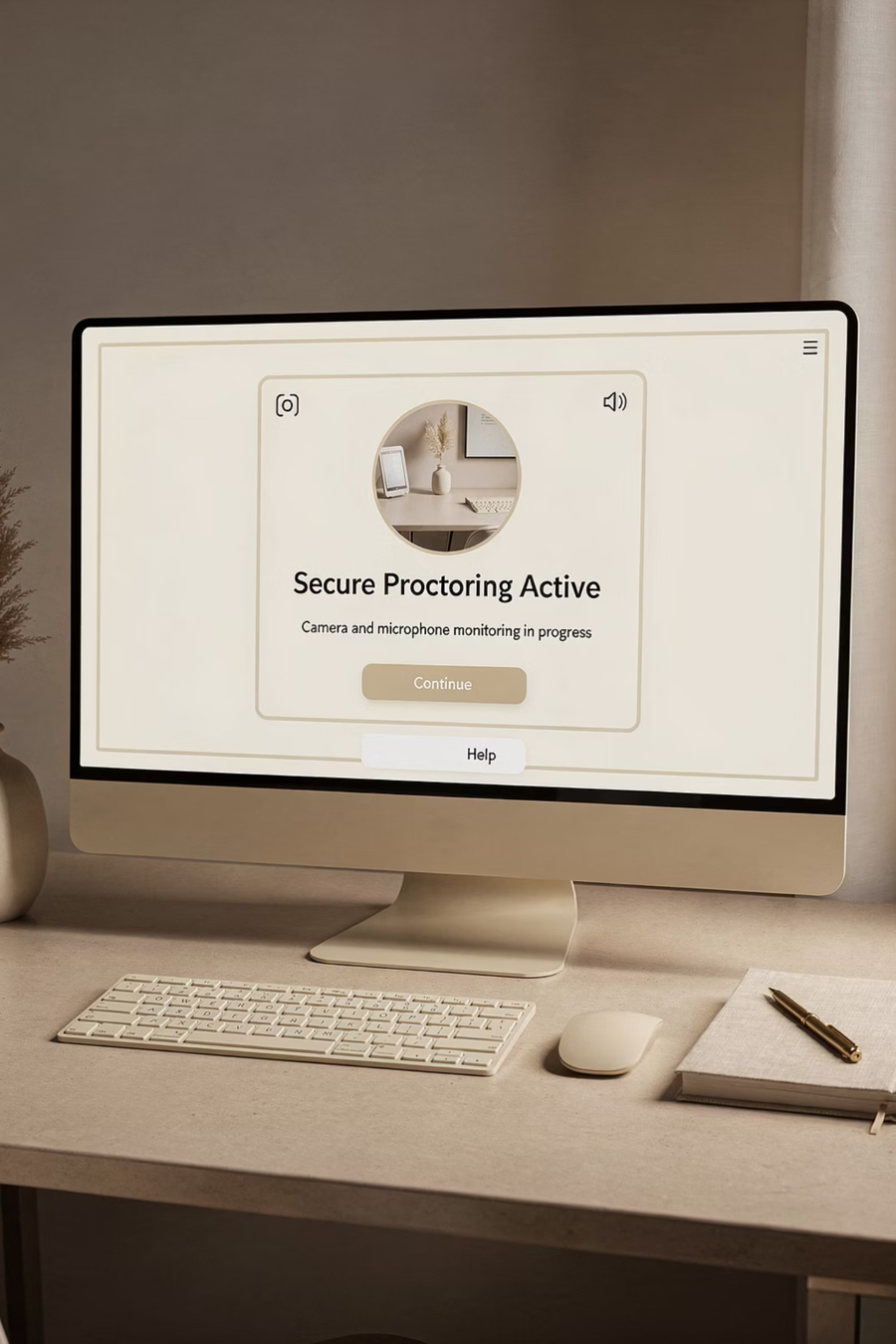

Can NativeScore run a secure hiring assessment test with proctoring?

Yes. Campaigns can enforce fullscreen mode, screen sharing, and camera and microphone permissions, and can record screen, camera, and voice artifacts for secure hiring assessments.
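As a rough illustration of how such campaign-level enforcement could be modeled, the sketch below checks a candidate's granted permissions against a campaign's security settings before an attempt starts. The class, field, and function names are hypothetical and are not NativeScore's actual configuration schema.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class SecurityPolicy:
    """Hypothetical per-campaign security settings (illustrative names only)."""
    require_fullscreen: bool = True
    require_screen_share: bool = True
    require_camera: bool = True
    require_microphone: bool = True


def missing_requirements(policy: SecurityPolicy, granted: set[str]) -> list[str]:
    """Return the required checks the candidate has not yet satisfied."""
    required = {
        "fullscreen": policy.require_fullscreen,
        "screen_share": policy.require_screen_share,
        "camera": policy.require_camera,
        "microphone": policy.require_microphone,
    }
    return [name for name, needed in required.items() if needed and name not in granted]


def can_start_attempt(policy: SecurityPolicy, granted: set[str]) -> bool:
    """An attempt may start only when no required check is missing."""
    return not missing_requirements(policy, granted)
```

A campaign that does not need screen sharing could pass `SecurityPolicy(require_screen_share=False)`, so the same entry check applies to both stricter and lighter campaigns.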

Is online certificate verification publicly available?

Yes. Each issued certificate has a unique ID that can be validated from the public Verify Certificate page.

Does NativeScore support language assessment workflows for English and regional languages?

Yes. Campaign admins can configure regional language assessments in addition to English workflows for hiring and student readiness.

Can BPO and KPO teams use communication skills and spoken English test workflows?

Yes. Admins, supervisors, and HR teams can run communication and spoken-English focused assessments and review attempt intelligence by role.

Does the platform support a typing test with a certificate and CEFR levels A1 to C2?

Yes. NativeScore supports a typing test with a certificate flow, and scores are interpreted against both the NativeScore level and the global CEFR levels from A1 to C2.
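To make the idea of score interpretation concrete, here is a minimal sketch that maps a numeric score to a CEFR band. The 0 to 100 scale and every cut-off value are assumptions chosen purely for illustration; the actual NativeScore level boundaries are not stated on this page.

```python
from bisect import bisect_right

# Hypothetical lower bounds (0-100 scale) for A2, B1, B2, C1, C2.
# These cut-offs are illustrative assumptions, not NativeScore's real bands.
CUTOFFS = [20, 40, 55, 70, 85]
BANDS = ["A1", "A2", "B1", "B2", "C1", "C2"]


def cefr_band(score: float) -> str:
    """Map a 0-100 score to a CEFR band using the assumed cut-offs above."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    # bisect_right counts how many cut-offs the score has reached.
    return BANDS[bisect_right(CUTOFFS, score)]
```

Keeping the cut-offs in one list means a program could adjust band boundaries without touching the lookup logic.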

Industry Knowledge Base

Practical guides for HR teams, schools, colleges, and multilingual hiring programs

Market Analysis

India hiring-assessment demand snapshot for schools, colleges, and employers

Assessment demand in India is growing quickly across campus placement programs, enterprise hiring teams, and service operations that require communication-ready candidates. Organizations now prefer structured evaluations over resume-only screening because they need consistent and measurable decisions.

Regional adoption is strongest across high-hiring corridors such as Telangana, Andhra Pradesh, Karnataka, Tamil Nadu, Delhi, Maharashtra, and Haryana. Multilingual readiness and certificate transparency are especially important in these markets where hiring velocity and trust requirements are both high.

NativeScore is designed for this operating context with campaign-level controls, clear module structure, and public certificate verification support for institutions and recruiting teams.

Platform Focus

How NativeScore supports practical readiness and trustworthy outcomes

NativeScore focuses on four core modules: reading, writing, listening, and speaking. This gives training teams and hiring teams a balanced view of communication readiness instead of relying on a single score component.

The platform also supports typing-oriented readiness and evidence-backed review workflows for supervisors and HR teams. This helps programs move from one-time testing toward continuous improvement and decision quality.

Public certificate verification provides an additional trust layer so institutions, employers, and candidates can validate outcomes in a simple and transparent way.

Online Assessment Trend

Why secure online language and typing assessments are now a core business requirement

Hiring and education teams are moving from offline screening to digital-first evaluation because recruitment speed, distance learning adoption, and distributed operations demand measurable online outcomes. NativeScore supports this transition by combining writing, listening, reading, speaking, and typing workflows in one environment that is easy to operate but detailed enough for serious decision making.

Organizations no longer need only question delivery. They need test integrity, candidate comparability, role-based review paths, and verifiable credentials that can be trusted by external stakeholders. NativeScore addresses these needs through campaign-level controls, progress tracking, and auditable output structure so that assessments are not one-time forms, but process-ready evaluation systems. This helps HR teams, colleges, and training institutes run scalable screening with better confidence and lower process ambiguity.

From a growth perspective, a platform that aligns with practical workflow outcomes can help institutions attract qualified candidates and partners faster. Clear digital assessment operations reduce failures caused by confusion in manual processes, while structured reporting enables faster decisions in interview pipelines and placement programs.

Special Offer

INR 121 special pricing for schools, colleges, and students who need practical exam exposure

NativeScore includes a special INR 121 track so schools and colleges can give students meaningful hands-on practice on real online assessment behavior. This offer is designed for institutions that want affordable access without compromising the practical value of the test flow. Instead of only theory training, students can experience timed sections, answer discipline, typing pressure, and result-based performance checkpoints that better reflect modern hiring and training expectations.

For student communities, this special plan is useful because many companies run mandatory online pre-HR tests before interview rounds. Candidates who are academically strong often underperform when they face strict timers, typed response constraints, and language-based filters on unfamiliar digital interfaces. With repeated practice under structured conditions, they build confidence and improve first-attempt outcomes in actual recruitment assessments.

Institutions can also position the INR 121 track as an employability enablement initiative. Placement cells can conduct periodic readiness drives, identify weaknesses in comprehension and typing speed, and run focused improvement cycles backed by measurable score evidence. This makes student support more practical, data-aware, and outcome-focused.

HR Use Case

How HR teams evaluate Hindi, English, and regional language capability before final interviews

NativeScore helps HR and recruitment operations run consistent language screening before manager rounds. Instead of relying only on resume claims and quick telephonic checks, teams can evaluate measurable ability across comprehension, expression, listening interpretation, and typing consistency. This is especially valuable in support operations, sales communication, multilingual service desks, and call center processes where communication quality directly affects customer experience.

A structured pre-HR screen saves interview bandwidth by filtering unprepared candidates early and routing stronger profiles forward with evidence. Recruiters can compare candidates in the same scoring frame and share score snapshots with hiring managers in a clear, process-friendly format. This improves interview quality and reduces cost per qualified shortlist because candidate capability is validated before high-value interview time is consumed.

For organizations managing diverse regions, multilingual screening can be mapped campaign-wise, ensuring each role is evaluated in the required language context. NativeScore supports this operational model without forcing teams to maintain separate disconnected systems for each language path.

Government and Public-Service Style Hiring

Regional language readiness support for high-volume service recruitment contexts

Government-facing and public-service style recruitment workflows often require candidates with practical language control in regional contexts. NativeScore can support these requirements through configurable campaign setup and multilingual evaluation paths. Teams can run structured Hindi, English, and regional-language assessments with consistent scoring logic to improve selection quality in large service environments.

Third-party assessment support becomes important when hiring volume is high and fairness expectations are strict. NativeScore contributes by providing transparent process structure, campaign governance controls, and verifiable certificate outputs that can be validated independently. This combination helps build trust in screening quality while reducing dependence on manual evaluation variability.

Where call center and citizen-support processes need regional fluency, NativeScore can be used to benchmark candidate readiness before onboarding. This protects service quality and ensures language fit is validated in measurable terms rather than assumed from self-declaration.

Case Study

Campus fresher readiness case: preparing candidates for MNC typing tests and online pre-HR assessments

In a common placement scenario, students completed college with strong academic performance but limited familiarity with online screening tools used by large employers. Many faced typing tests and communication assessments before HR rounds and were eliminated due to interface pressure, timing stress, and low confidence rather than lack of potential. NativeScore was adopted as a practical readiness layer to bridge this gap.

The placement team ran repeat mock cycles with targeted feedback for typing speed, reading focus, listening retention, and response quality. Because each attempt produced structured score data, mentors could coach students with evidence instead of generic advice. Over multiple cycles, candidates reported stronger comfort with timed exam conditions and better execution in employer-facing online rounds.

This case pattern shows why practical digital exposure matters for freshers. NativeScore transforms readiness from a one-time workshop into a measurable progression system, helping institutions reduce avoidable failure in pre-HR screening pipelines and improve candidate confidence during campus hiring seasons.

Distance Learning

How schools and colleges use online assessments to prepare students for digital-first careers

Distance learning and hybrid programs have made digital assessment behavior a foundational skill. Students now need to operate confidently in secure online environments, complete timed tasks, and submit high-quality responses without classroom dependency. NativeScore helps institutions train this behavior through realistic practice campaigns that mirror modern exam and hiring conditions.

Colleges can integrate assessments into placement readiness calendars, language labs, and employability modules so students gain repeated familiarity with real platform workflows. This includes typing discipline, comprehension under time constraints, and response quality tracking. The result is not only score improvement but also reduced anxiety when students face mandatory online tests from recruiters and corporate hiring partners.

For schools, early exposure to guided digital assessments creates long-term benefits in confidence, self-evaluation, and communication skill development. Institutions can use this model to support both academic progression and future workforce preparedness.

Typing Assessment Need

Typing tests as mandatory filters in BPO, support, and data-driven job roles

Many large employers include typing and online language screens before HR conversations, especially in roles that require real-time communication, ticket handling, and service documentation. NativeScore aligns with this hiring reality by offering practical typing and language capability checks that can be repeated for improvement and benchmarked for eligibility decisions.

Students and candidates often underestimate typing as a selection criterion until they encounter actual screening rounds. With NativeScore, institutions and training partners can build targeted preparation programs where participants practice speed, accuracy, and comprehension together, not in isolation. This integrated approach is more aligned with real recruitment tools than standalone typing games.

When typing outcomes are tied to broader language assessments, organizations get a stronger capability picture. Recruiters can identify candidates who not only type fast but also understand instructions, process input correctly, and respond in role-relevant language standards.
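For readers who want to see how speed and accuracy can be measured together, the sketch below uses the common convention that one "word" equals five characters. It is a simplified illustration and not NativeScore's scoring method: characters are compared position by position, so a single inserted or skipped character would shift everything after it.

```python
def typing_metrics(expected: str, typed: str, seconds: float) -> dict:
    """Compute gross WPM, net WPM, and accuracy for one typing attempt.

    Simplifying assumption: characters are compared position by position.
    """
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    minutes = seconds / 60
    # Count characters that match the expected text at the same position.
    correct = sum(1 for e, t in zip(expected, typed) if e == t)
    gross_wpm = (len(typed) / 5) / minutes   # everything typed
    net_wpm = (correct / 5) / minutes        # only correct characters
    accuracy = correct / len(typed) if typed else 0.0
    return {
        "gross_wpm": round(gross_wpm, 2),
        "net_wpm": round(net_wpm, 2),
        "accuracy": round(accuracy, 4),
    }
```

Reporting net speed alongside accuracy reflects the point above: a fast typist with many errors and a slower, accurate typist show up as different profiles rather than one blended number.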

Supervisor and Admin Intelligence

Operational visibility for campaign owners, reviewers, and compliance teams

NativeScore gives operational stakeholders practical control through role-based dashboards. Admin users can configure campaigns, supervisors can review attempt intelligence, and HR teams can evaluate candidate outcomes based on authorized scope. This design supports accountability in environments where multiple teams participate in screening decisions.

Visibility is especially important when organizations run multilingual or high-volume assessments. Decision makers need a clear view of status, progress, and quality indicators without navigating disconnected systems. NativeScore centralizes this workflow so review efficiency improves while process integrity remains intact.

For institutions and enterprises, this governance model supports repeatable execution. Teams can standardize workflows, reduce operational confusion, and maintain quality as assessment scale increases across departments or campuses.
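The role split described in this section can be pictured with a small access check. This is a sketch under stated assumptions: the action names, the one-action-per-role mapping, and the idea of a per-user set of authorized campaigns are all hypothetical and only mirror the description here, not NativeScore's real permission model.

```python
from enum import Enum


class Role(Enum):
    ADMIN = "admin"
    SUPERVISOR = "supervisor"
    HR = "hr"


# Hypothetical mapping that mirrors the roles described in this section.
ROLE_ACTIONS = {
    Role.ADMIN: frozenset({"configure_campaign"}),
    Role.SUPERVISOR: frozenset({"review_attempts"}),
    Role.HR: frozenset({"view_outcomes"}),
}


def is_allowed(role: Role, action: str, authorized_campaigns: set, campaign_id: str) -> bool:
    """Allow an action only if the role permits it and the campaign is in scope."""
    return action in ROLE_ACTIONS[role] and campaign_id in authorized_campaigns
```

Checking both the role and the campaign scope in one place is one way to keep "authorized scope" enforceable as more teams and campaigns are added.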

Certificate Trust

Public verification and long-term value of digital language credentials

Certification is valuable only when it can be trusted by third parties. NativeScore includes certificate generation with public verification so employers, institutions, and partners can validate credential authenticity using a unique ID. This supports credibility in hiring and learning ecosystems where proof of skill must be checked quickly and reliably.

Public verification also helps candidates because they can share credentials with confidence across opportunities without repeated manual explanations. Recruiters and reviewers can validate results independently and proceed with decision workflows faster. This reduces friction between candidate achievement and employer trust.

In long-term operations, certificate verification becomes a durable quality signal for the platform itself. It demonstrates process transparency and improves confidence in assessment outcomes beyond the moment of test completion.
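The unique-ID verification idea can be sketched as a simple issue-and-lookup flow. Everything below is an illustrative assumption (the in-memory registry, the ID format, and the record fields); it is not how NativeScore stores or verifies certificates.

```python
import secrets
from typing import Optional


class CertificateRegistry:
    """Hypothetical in-memory registry for illustration only."""

    def __init__(self) -> None:
        self._records: dict = {}

    def issue(self, candidate_name: str, cefr: str) -> str:
        """Create a certificate record and return its unique ID."""
        cert_id = secrets.token_hex(8).upper()  # 16 hex characters, hard to guess
        self._records[cert_id] = {"candidate": candidate_name, "cefr": cefr}
        return cert_id

    def verify(self, cert_id: str) -> Optional[dict]:
        """Return the record for a known ID, or None if the ID is not found."""
        return self._records.get(cert_id.strip().upper())
```

A public verification page would then only need the ID as input: a match returns the recorded outcome, and an unknown ID returns nothing, so a reviewer can check a credential without contacting the issuer.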

Multilingual Capability

Hindi, English, and regional language evaluation under one controlled framework

Language readiness varies by role and geography. NativeScore supports multilingual configuration so campaigns can evaluate candidates in Hindi, English, and regional languages according to job context. This flexibility is critical for organizations hiring across markets where language fit directly influences service performance.

Regional language assessment is increasingly important in customer support and distributed service processes. NativeScore helps organizations validate communication quality before onboarding, reducing downstream service risk and training overhead. Institutions can also use this model to prepare students for region-specific opportunities with better confidence.

Running all language paths in one framework improves governance and reporting consistency. Teams avoid fragmented tools and maintain a standard process for scoring, review, and certificate validation across language tracks.

Implementation Roadmap

From pilot to scale: practical rollout steps for institutions and employers

Most successful deployments follow a phased model: start with one campaign, validate score reliability, train operational users, and scale based on outcome confidence. NativeScore supports this progression through campaign-level controls that can be expanded without redesigning the entire workflow. This lowers implementation risk and accelerates adoption.

During the pilot phase, teams can focus on one high-impact use case such as pre-HR language screening or placement typing readiness. Once baseline effectiveness is proven, additional role tracks, language paths, and review policies can be introduced in a controlled manner. This keeps expansion predictable and measurable.

A phased roadmap is also budget-friendly because organizations can align license growth with demonstrated value. NativeScore can therefore support both early-stage adoption and enterprise-scale expansion while maintaining process continuity.

Custom Tooling by License Size

Requirement-based customization for high-volume assessment programs

When candidate volume grows, organizations often need custom workflow behavior: role-specific campaign paths, reporting depth changes, governance rules, and specialized process alignment. NativeScore can support requirement-based configuration linked to license scale so customers can move beyond generic setups and operate with better strategic fit.

This model is useful for large campus networks, enterprise hiring programs, and multilingual service operations where one-template logic is not enough. By mapping configuration to license and process size, NativeScore enables practical customization without losing platform consistency.

Custom tooling can include content strategy alignment, review queues, certificate governance, and language-specific deployment controls. The result is a scalable assessment system designed around real operational outcomes rather than isolated test execution.